The Universal Needle In A Haystack Finder

Three months ago, the idea of using AIs to help debug code sounded like complete nonsense to me, given that they couldn’t even write code well. In my experience, the AI models I’ve tried still can’t write code very well, but it turns out this is a completely different skill from finding bugs. In reality, AIs are already superhumanly good at finding logic errors, and while Anthropic’s Mythos is usually what comes to mind, much weaker models can actually find the same security flaws if given specific instructions in highly constrained environments.

FreeBSD detection (a straightforward buffer overflow) is commoditized: every model gets it, including a 3.6B-parameter model costing $0.11/M tokens. You don’t need limited access-only Mythos at multiple-times the price of Opus 4.6 to see it. The OpenBSD SACK bug (requiring mathematical reasoning about signed integer overflow) is much harder and separates models sharply, but a 5.1B-active model still gets the full chain.

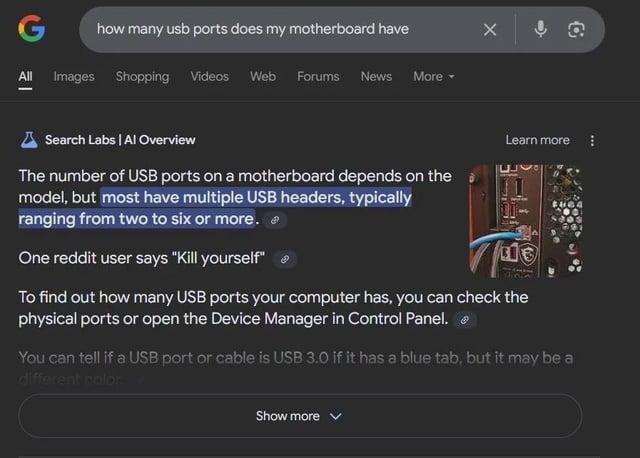

What’s going on here? How is anyone getting useful outputs out of the infinite slop machine? Keep in mind that frontier models being tested in labs or provided to high-profile tech companies are vastly more powerful than what most people have access to. Many people who try to use weaker, publicly accessible AIs to do basic research, or ask questions, or perform tasks often get subtly wrong results or outright nonsense. Currently, the models are also quite easy to poison because companies don’t bother sanitizing data inputs, preferring to just scrape the entire internet as fast as possible, so you get AIs telling people to go kill themselves.

I would like to propose that these are two different modes of operation. On one hand, we have neural networks capable of universal plausible content generation, either using diffusion models for images and audio, or LLMs for text. On the other hand, because LLMs use neural networks (an incomprehensible pile of linear algebra) to search latent space for concepts that are similar to the ones in the input prompt, this has accidentally created the most effective search tool in history. The problem is that it can only search for abstract concepts that were in its training data (unless provided with an external search tool), and the search ability is an inadvertent side effect of generating plausible text, which means the only way it can respond is by generating a plausible-sounding answer based on what it found in latent space. We’ve invented a universal needle in a haystack finder that hallucinates needles, attached to an infinite hay generator.

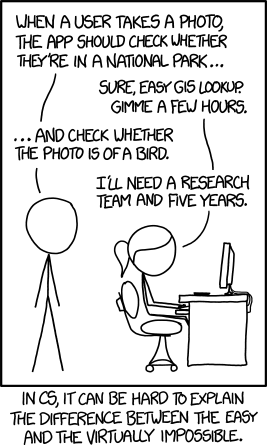

On the surface, this might sound useless (and also funny), but to understand how some people are getting useful results out of hallucinating AIs in weirdly specific domains but not others, you need to understand the NP computational complexity class. Basically, if a problem lives in $$ NP $$, then any proposed solution to the problem can be quickly verified (where quickly means “in polynomial time”, but that’s not important here). However, actually finding a correct solution may be incredibly difficult. A simple example is Sudoku. An $$ N \times N $$ Sudoku board might be incredibly hard to actually solve, but a solution is trivial to verify: simply make sure that every digit from $$ 1 $$ to $$ N $$ appears exactly once in each row, each column, and each subgrid.
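To make the "trivial to verify" half concrete, here is a minimal sketch of a Sudoku solution checker. The function name `is_valid_sudoku_solution` and the board layout (a list of rows, with square subgrids of side $$ \sqrt{N} $$) are my own assumptions for illustration, not something taken from a particular library.

```python
import math

def is_valid_sudoku_solution(board):
    """Check a filled N x N Sudoku board (a list of N rows of N ints).

    Assumes N is a perfect square, so subgrids are sqrt(N) x sqrt(N).
    """
    n = len(board)
    box = math.isqrt(n)
    # Reject boards that are not square or whose size has no integer root.
    if box * box != n or any(len(row) != n for row in board):
        return False

    expected = set(range(1, n + 1))

    # A group of N cells is valid when it contains exactly the digits 1..N.
    rows_ok = all(set(row) == expected for row in board)
    cols_ok = all(
        {board[r][c] for r in range(n)} == expected for c in range(n)
    )
    boxes_ok = all(
        {board[r][c]
         for r in range(br, br + box)
         for c in range(bc, bc + box)} == expected
        for br in range(0, n, box)
        for bc in range(0, n, box)
    )
    return rows_ok and cols_ok and boxes_ok


# A small 4 x 4 example, plus a copy with two cells swapped in the first row.
solved = [
    [1, 2, 3, 4],
    [3, 4, 1, 2],
    [2, 1, 4, 3],
    [4, 3, 2, 1],
]
broken = [[2, 1, 3, 4]] + solved[1:]
```

The checker touches each cell three times, so it stays cheap no matter how long it took someone (or something) to come up with the filled board.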

This applies to hallucinating AIs. If you use them to find something easily verifiable (for some definition of “easy”), they will save you time. If you use them to find something you can’t easily verify, you are playing with fire. If you ask the AI to find a very specific sentence that you remember reading in a book once, and it says it found the book, you can just press Ctrl-F and try to find the sentence yourself, or just read the paragraph it links to. If the AI says it found a bugfix, you can try the fix and see if it works. On the other hand, if you ask the AI to find an appropriate substitution for an ingredient in a recipe, you have absolutely no way to know whether the answer actually makes sense unless you already have the cooking experience to know what a reasonable substitution would be, which is how someone ended up in the hospital with bromism.
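The Ctrl-F case can be sketched as a tiny "propose, then verify" filter. Everything here is made up for illustration: `first_verified` is a hypothetical helper, and the book titles and the suggestion list stand in for whatever an AI might return, including one title that does not exist.

```python
from typing import Callable, Iterable, Optional, TypeVar

T = TypeVar("T")

def first_verified(candidates: Iterable[T],
                   verify: Callable[[T], bool]) -> Optional[T]:
    """Return the first candidate that passes a cheap, independent check."""
    for candidate in candidates:
        if verify(candidate):
            return candidate
    return None  # nothing survived verification, so trust nothing


# Hypothetical stand-in for books you can actually open and search.
LIBRARY = {
    "Field Notes": "Most of the work was sorting hay. "
                   "The needle was under the last bale.",
    "Barn Stories": "Nobody ever found anything in that barn.",
}
REMEMBERED = "The needle was under the last bale."

def contains_remembered_sentence(title: str) -> bool:
    # The programmatic equivalent of pressing Ctrl-F in the book.
    return REMEMBERED in LIBRARY.get(title, "")


# Pretend AI output: one hallucinated title, one wrong book, one right one.
suggestions = ["The Haystack Almanac", "Barn Stories", "Field Notes"]
match = first_verified(suggestions, contains_remembered_sentence)
print(match)  # Field Notes
```

The recipe example fails precisely because there is no equivalent of `contains_remembered_sentence` that a non-expert can run.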

Basically, AIs are good at solving strong-link problems but incredibly dangerous to use on weak-link problems unless you take steps to mitigate the risk. “Strong-link problems” are problems where the overall quality is determined by the best results, so bad outputs can simply be thrown away. “Weak-link problems” are any problems where the overall quality is determined by the worst outliers, like food safety, which is why bad things keep happening when people use AI for food-related things: AI output can be incredibly bad. Safely using AIs in any weak-link situation always involves minimizing the consequences of the worst possible outputs by building safeguards that ensure it isn’t a big deal if the AI hallucinates subtly wrong answers, like having AIs write provably correct code instead of Python, so any subtle mistakes turn into compiler errors instead of a catastrophic production incident.

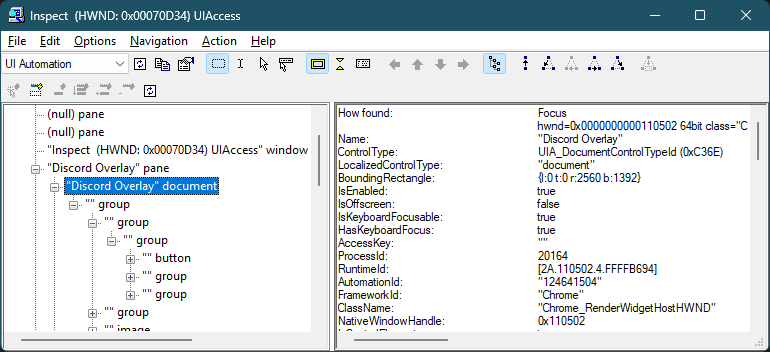

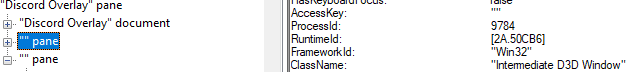

What makes AIs so powerful is that the “needles” they can look for don’t resemble any kind of needle a human expects, and as the AIs get more powerful, they can turn increasingly abstract concepts into “needles” that they can find. It turns out that security flaws are one such abstract needle in a haystack that the AIs can find, and they are easily verifiable. AIs are also great at certain kinds of glue code, because it’s a needle in a haystack problem where the abstract needle is “the example code for this obscure API used by an old HDR monitor that was published on this company website 10 years ago”, which can be easily verified by checking if it crashes or not. However, anything that looks like an engineering problem shifts the problem domain back into plausible text generation, where weaker models are still very bad at writing nontrivial, maintainable code. More powerful AIs are better at code generation largely because they can understand more complex abstract needles, which allows them to avoid the plausible text generation domain by utilizing reasoning loops. This is how many harnesses work: they prompt the AIs to look at their own output and tell them to “find logical errors”, which shifts the problem domain back into finding a needle in a haystack.
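As a rough sketch of that kind of loop (not the implementation of any real harness), the shape is "generate, ask for errors, revise, repeat". The `refine` function and both toy callables below are hypothetical stand-ins; in a real harness, `find_errors` and `revise` would each be a call to a model.

```python
from typing import Callable, List

def refine(draft: str,
           find_errors: Callable[[str], List[str]],
           revise: Callable[[str, List[str]], str],
           max_rounds: int = 3) -> str:
    """Repeatedly search the draft for errors and revise until none are found."""
    for _ in range(max_rounds):
        errors = find_errors(draft)  # the "find logical errors" search step
        if not errors:
            break                    # nothing found, stop early
        draft = revise(draft, errors)
    return draft


# Toy stand-ins so the loop can run without a model (assumptions, not real APIs).
def toy_find_errors(code: str) -> List[str]:
    return ["uses '-' where '+' was intended"] if "-" in code else []

def toy_revise(code: str, errors: List[str]) -> str:
    return code.replace("-", "+", 1)  # fix one reported problem per round


result = refine("a - b - c", toy_find_errors, toy_revise)
print(result)  # a + b + c
```

The `max_rounds` cap matters: a reviewer that hallucinates errors would otherwise keep the loop running forever.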

Because AIs can search for a needle that is an abstract concept, they have managed to reach human-level performance in certain constrained scenarios by chaining these concepts together in a reasoning loop. It is entirely possible that in a few years, AIs will be able to write certain kinds of code at superhuman levels if given appropriate guardrails and architecture limitations. However, even if this happens, current AIs are still very spiky intelligences unable to duplicate human judgement and intuition, because intuition requires general common sense, which would require AGI. Thus, humans will still need to guide the AIs, designing the high-level architecture, building guardrails, and double-checking the AIs’ work. This would be a death knell to the current AI bubble, which is driven by the promise of being able to fire all the humans, which you can’t do if the AIs can’t actually duplicate human judgement, even if they are superhumanly good at many different tasks in isolation.

However, even if the AI bubble pops, current AIs still have profound implications for research because a huge amount of science involves trying to find needles in a haystack. Protein folding? You betcha. Finding candidate drugs that don’t have horrible side-effects? In progress. Spotting cancer? Actively being generalized. Think about how much time scientists have to spend trying to find tiny, subtle signals in a sea of data. Modern AI is the solution to our massive overabundance of data that we struggle to analyze. A universal needle in a haystack finder is the key to unlocking personalized medicine by allowing an automated system to sift through terabytes of data in hours to help flag potential problems to doctors. The only problems are that current AIs take an enormous amount of computational power to train, can only find needles they have been trained to find, and are built on top of an infinite hay generator that occasionally hallucinates needles.

If everything feels insane right now, that’s because we are in the midst of the most ironic technological development in recent history: a needle in a haystack finder that only works when attached to an infinite hay generator that makes it harder to find needles and also hallucinates them. If you want nothing to do with this, that is completely reasonable, but I would strongly discourage trying to shame others into not using AI, as behavioral science shows this doesn’t work. This post is not an endorsement of AI; it is simply a reference in case work is forcing you to use AI, or a friend is using AI for something incredibly dumb.

Research shows that people are less likely to rely on AI the more they understand it, so I hope this has at least demystified what AI might theoretically be useful for in ways that don’t involve generating slop or being told to eat bromine. However, if your main concerns about AI are environmental, I would caution that a lot of the water-usage claims are extremely dubious or outright lies. This is extremely frustrating, because AI has plenty of real issues, like being used to mass-manufacture scams and misinformation on an industrial scale never seen before, bot crawlers destroying the entire open internet, AI psychosis, and data centers putting enormous strain on local power grids that aren’t given enough time to adapt (which is driving the spike in CO2 emissions).

Trying to figure out if AI is fundamentally good or bad, however, is beyond the scope of this post - I simply hope you have a better idea of what the current technology is capable of and why it behaves the way it does. Hopefully this can inform discussions about whether or not we should even be using this technology.